One of the latest scandals in science is the shoddy research of Brian Wansink, with new scrutiny on his papers resulting in many of them being revised or withdrawn. Apparently this started in the aftermath of a post on his blog titled The Grad Student Who Never Said No. I bring this up because it ties into a previous post of mine, The Fundamental Principle of Science. But the entire blog post is very interesting so let’s look at it.

A PhD student from a Turkish university called to interview to be a visiting scholar for 6 months. Her dissertation was on a topic that was only indirectly related to our Lab’s mission, but she really wanted to come and we had the room, so I said “Yes.”

So far, no problems.

When she arrived, I gave her a data set of a self-funded, failed study which had null results (it was a one month study in an all-you-can-eat Italian restaurant buffet where we had charged some people ½ as much as others).

Right away you have a problem with whatever they’re trying to find out because there’s no realistic way to charge people half as much as others. Most people find out what a buffet costs before ordering it, with this influencing their choice to eat there or not. Further, many people will be regular customers and thus already know what the buffet costs.

I said, “This cost us a lot of time and our own money to collect. There’s got to be something here we can salvage because it’s a cool (rich & unique) data set.”

This is a really bad sign. If your experiment fails, you’re not supposed to torture the data until it tells you what you want to hear. This is called p-hacking, and it results in an awful lot of garbage. Virtually all data sets have some correlations in them by sheer chance; finding them is simply misleading.
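To make the "sheer chance" point concrete, here is a minimal sketch, not anything from Wansink's actual data. It invents two groups of diners (think half-price and full-price) and measures them on 200 outcomes that are pure random noise, then counts how many comparisons clear the usual p < 0.05 bar. The function name, the group sizes, and the cutoff of 1.97 (roughly the two-sided 5% threshold for a t statistic with groups this large) are all my own assumptions for illustration.

```python
import random
import statistics


def count_false_positives(n_outcomes=200, n_per_group=100, t_cutoff=1.97, seed=0):
    """Compare two groups on many noise-only outcomes and count the 'significant' ones.

    Everything here is hypothetical: both groups are drawn from the same
    distribution, so every difference that clears the cutoff is a false positive.
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_outcomes):
        group_a = [rng.gauss(0, 1) for _ in range(n_per_group)]
        group_b = [rng.gauss(0, 1) for _ in range(n_per_group)]
        # Welch-style t statistic: difference in means over its standard error.
        std_err = (
            statistics.variance(group_a) / n_per_group
            + statistics.variance(group_b) / n_per_group
        ) ** 0.5
        t_stat = (statistics.mean(group_a) - statistics.mean(group_b)) / std_err
        if abs(t_stat) > t_cutoff:
            hits += 1
    return hits


hits = count_false_positives()
print(f"{hits} of 200 noise-only comparisons look 'significant'")
```

On average about 5% of the comparisons (roughly 10 of 200) should come out "significant" even though there is nothing to find. Any one of them could be written up as a discovery if you only report the ones that stuck.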

I had three ideas for potential Plan B, C, & D directions (since Plan A had failed). I told her what the analyses should be and what the tables should look like. I then asked her if she wanted to do them.

Granted, this isn’t quite as bad as the approach where one uses a computer to generate hundreds or thousands of “hypotheses” and test them against the dataset to find one that will stick to the wall, but it’s a bad sign. This is such a bad practice, in fact, that some scientific journals are requiring hypotheses to be pre-registered to prevent people from doing this.
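The arithmetic behind why journals care is short. As a rough sketch, assume every test is independent and uses the conventional 5% threshold (both are simplifying assumptions of mine, and the function name is made up for illustration). Then the chance that at least one of k true-null hypotheses comes up "significant" is 1 − (1 − 0.05)^k:

```python
def chance_of_false_positive(n_tests, alpha=0.05):
    """Probability that at least one of n_tests independent null tests passes at level alpha."""
    return 1 - (1 - alpha) ** n_tests


for k in (1, 3, 20, 100):
    print(f"{k:>3} hypotheses: {chance_of_false_positive(k):.1%} chance of a spurious hit")
```

Even at three hypotheses the chance of a spurious hit is about 14%, and by twenty it is about 64%. Real analyses on one data set are rarely independent, so treat these as ballpark figures rather than exact ones.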

Every day she came back with puzzling new results,

This is a very bad sign. It’s a huge red flashing neon sign that your data set has a lot of randomness in it.

and every day we would scratch our heads, ask “Why,” and come up with another way to reanalyze the data with yet another set of plausible hypotheses.

Now this is just p-hacking, except without a computer. You could call it artisanal, hand-crafted p-hacking.

Eventually we started discovering solutions that held up regardless of how we pressure-tested them.

I’m actually kind of curious what he means here by “pressure-testing”. Actual pressure-testing is putting fluid into pipes at significantly higher pressures than the working system will be under to ensure that all of the joints are strong and have no leaks. Given that the data set has already been collected, I can’t think of an analog to that. Perhaps he meant throwing out the best data points to see if the rest still correlated?

I outlined the first paper, and she wrote it up, and every day for a month I told her how to rewrite it and she did.

What was going on that 30 rewrites were necessary? Perhaps this grad student just sucked at writing, but at some point one really should pick an idea and stick with it. I really doubt that thirtieth rewrite was much better than the 23rd or the 17th.

This happened with a second paper, and then a third paper

So we’re up to about 90 rewrites in 3 months? That’s a lot of rewrites for papers about something as weak as tracking the behavior of people eating at a randomly discounted Italian buffet.

(which was one that was based on her own discovery while digging through the data).

This is pure snark, but I can’t resist: she learned to p-hack from the master.

At about this same time, I had a second data set that I thought was really cool that I had offered up to one of my paid post-docs (again, the woman from Turkey was an unpaid visitor). In the same way this same post-doc had originally declined to analyze the buffet data because they weren’t sure where it would be published, they also declined this second data set. They said it would have been a “side project” for them they didn’t have the personal time to do it.

It’s really interesting that we have no idea what the post-doc actually said. It’s possible that the post-doc was just being polite and came up with an excuse to avoid p-hacking. It’s also possible that the post-doc said that this seemed like p-hacking and Wansink interpreted that as trying to cover for not thinking that it was prestigious enough work.

But it’s also possible that someone who wanted to work with an apparent p-hacker like Wansink actually was concerned only with how prestigious a journal the p-hacked results could be published in.

Boundaries. I get it.

I strongly suspect that he doesn’t get boundaries. Most people who have to talk about them this way, saying that they respect other people’s boundaries, don’t. At least in the cases I’ve seen. People who respect boundaries do so as a matter of course. It’s a bit like how people who don’t stab others in the face with spoons don’t talk about it; they just don’t do it.

Six months after arriving, the Turkish woman had one paper accepted, two papers with revision requests, and two others that were submitted (and were eventually accepted — see below).

P-hacking is far more productive than having to find real results. That’s why it’s so tempting.

In comparison, the post-doc left after a year (and also left academia) with 1/4 as much published (per month) as the Turkish woman.

Right, but how good was it?

I think the person was also resentful of the Turkish woman.

This could mean several things, depending on what the person actually said and meant when they declined to p-hack the buffet data set. If it was purely self-aggrandizement, then this becomes a valid criticism. If they were actually demurring from p-hacking, then the resentment makes a lot of sense, since the Turkish woman made them look bad for standing on principle while others transgressed and didn’t get caught.

Balance and time management has its place, but sometimes it’s best to “Make hay while the sun shines.”

This part is certainly true. It’s rarely a good idea to disdain low hanging fruit. Unless it’s wax fruit, not real fruit.

About the third time a mentor hears a person say “No” to a research opportunity, a productive mentor will almost instantly give it to a second researcher — along with the next opportunity.

I really wonder what he thinks that the word “mentor” means. Whatever it is, it clearly doesn’t involve actually mentoring anyone. But don’t just pass over this, look at how glaring it is. The first half of the sentence, “About the third time a mentor hears a person say ‘No’ to a research opportunity”, is the setup for explaining how the mentor will then help the person to learn. Instead, the next three words are almost a contradiction in terms: “a productive mentor.” To mentor someone is to put time and energy into helping them learn. It’s the opposite of being productive. Craftsmen are productive. Mentors are supposed to be instructive. And then the rest of the sentence can be translated as, “…will just give up on the person.”

I think the word he was looking for was “foreman,” not “mentor”.

This second researcher might be less experienced, less well trained, from a lessor school, or from a lessor background, but at least they don’t waste time by saying “No” or “I’ll think about it.” They unhesitatingly say “Yes” — even if they are not exactly sure how they’ll do it.

Yeah, the word he was looking for was “foreman”.

Facebook, Twitter, Game of Thrones, Starbucks, spinning class . . . time management is tough when there’s so many other shiny alternatives that are more inviting than writing the background section or doing the analyses for a paper.

I’ve got to say: if the reason that the post-doc wouldn’t p-hack the buffet data set was that they were too busy checking Facebook and Twitter, watching Game of Thrones, sipping chai lattes at Starbucks, and going to spinning class… that was actually a better use of time.

Yet most of us will never remember what we read or posted on Twitter or Facebook yesterday. In the meantime, this Turkish woman’s resume will always have the five papers below.

Ironically, Wansink is likely to remember this blog post for a long time, since it drew attention to his p-hacking. And at this point, there’s a lot of it.

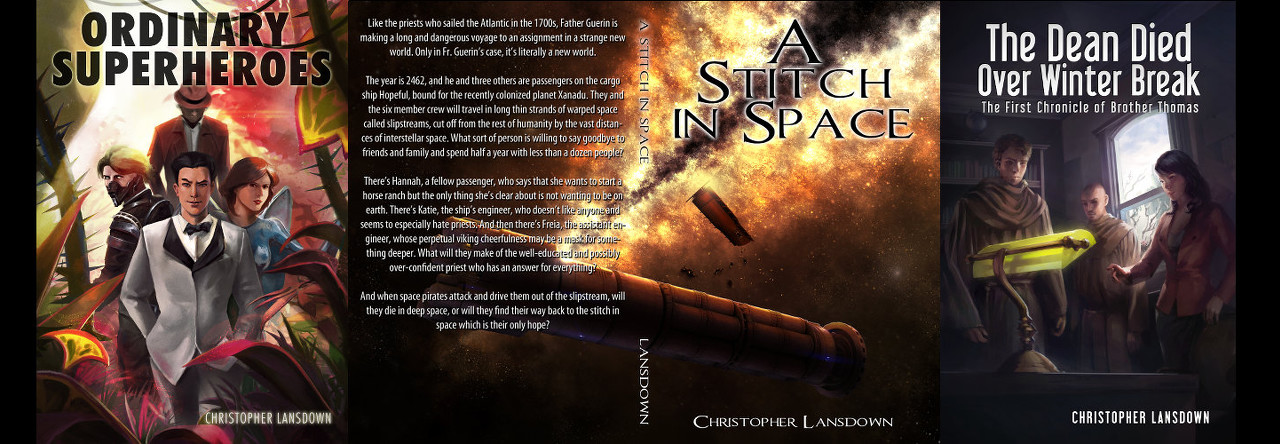
