On the thirteenth day of October in the year of our Lord 1985, the third episode of the second season of Murder, She Wrote aired. Titled Murder in the Afternoon, it’s set in New York City. (Last week’s episode was Joshua Peabody Died Here… Possibly.)

The scene opens with Felix, sitting at his desk, writing. After a few moments, a woman walks in.

She walks over as seductive music plays and complains that he’s taking too long. When he says he just needs to finish the page he’s working on, she whispers something in his ear and slinks off, shutting the door behind her.

As he’s finishing, the door opens again and a mysterious figure dressed all in black creeps in.

As Felix begs for his life, the figure takes aim and shoots.

He clutches his arm and sinks to the floor.

We then hear someone yell “cut!” and a director storms over to ask him why he did the scene wrong—he was supposed to be shot in the stomach. It turns out that he’s an actor named Martin Grattop and this is a soap opera. And he did it wrong because he refuses to cooperate with being killed off.

The show runner, Joyce Holleran, then comes in.

She’s new to the show and has created the “Pittsfield Avenger,” who has been killing off characters from the show, which has boosted ratings significantly, earning her the appreciation of the Network.

Into this argument, Julian Tenley—an actor who has been on the show for decades—sticks his nose and tells Joyce that changes like these should be woven in over the course of months.

No one listens to him, though they are polite to him.

Joyce then storms back to her office where she complains to one of the writers—or perhaps the only writer, Carol.

Joyce tells her how Martin intentionally ruined the take and refuses to play the scene as written, so they’ll need to redo the hospital scene but keep the new character, the orderly. It was a terrific introductory scene that Carol wrote.

Another actor on the show, Todd, comes in on the end of the conversation (right after Joyce asked if she’d left her keys in Carol’s office).

Unlike everyone else, he wants to leave the show because he’s getting extremely good offers from Hollywood.

Joyce, of course, doesn’t care, and won’t let him out of his contract.

We then meet the reason that Jessica is going to be in this episode: her niece, Nita Cochran.

She’s asking Joyce for assurance that she won’t be removed from the show. (While Joyce hasn’t decided who the Pittsfield Avenger will be, Nita has been playing the character, whose face has never been visible.) She explains that she needs the money from steady employment to take care of her ill mother.

Which is a bit absurd—acting is not the profession to enter if you want steady employment or have dependents. It’s an incredibly volatile business, full of ups and downs, and to paraphrase one of the great Robin Hood movies: sometimes the ups outnumber the downs, but not in Tinseltown. Not for the overwhelming majority of actors, anyway.

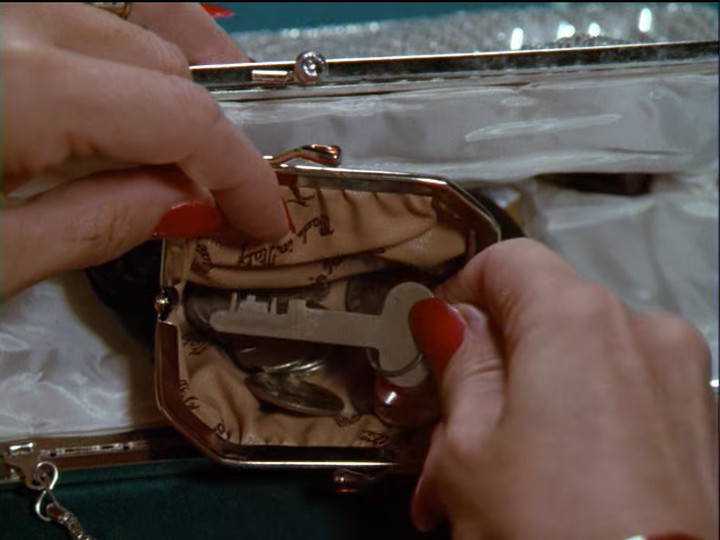

In the middle of this, Joyce, who isn’t paying much attention, finds her keys:

She even remarks, “Here they are. How in the world did they get here?” (I assume this means that someone took them for a while to have them copied and this will be revealed in the last 10 minutes of the episode.)

Joyce is unmoved by Nita’s speech and (correctly) points out that if Nita wants job security she’s in the wrong business. Then Joyce leaves.

We then cut to a nursing home:

As we get this establishing shot, we hear a woman’s voice say, “Making my sweet little Nita the Pittsfield Avenger would be utter folly.”

Then we meet the owner of the voice.

Her name is Agnes and she fills Jessica in on the various ways in which Joyce is ruining the show Nita is in. She also describes who some of the characters are by recounting the absurd plotlines they’ve been in. (Absurd plotlines are a staple of daytime soap operas.)

Nita then comes in saying that she swears she could kill Joyce. We then find out Agnes is her grandmother while Jessica is her aunt. Since Agnes is, presumably, not Jessica’s mother, I guess she must be the mother of whichever parent isn’t related to Jessica. We don’t actually get this explained, or why Jessica is visiting her, though.

After a bit more conversation, Nita walks Jessica somewhere and explains that she’s worried because if she turns out to be the avenger, she’ll eventually be written out of the show. She doesn’t explain why, though. Soap operas are famous for their convoluted plots that constantly bring characters back from the dead; it would hardly be impossible for someone who turned out to be a mass murderer to show up again.

And, of course, the writers can always write in an identical twin. From what I’ve heard, the number of identical twins in soap operas is extraordinary.

Anyway, Nita brings Jessica to the set of the show, for some reason. There, we meet two of the actors, Herbert Upton (left) and Bibi Hartman (center), and Joyce’s husband, Larry Holleran (right):

In the small talk we discover that Herb plays a detective on the show and is so far into character that last year, when Larry shot a burglar in his apartment, Herb wanted to handle the case himself. Herb also turns out to be yet another person who’s read all of Jessica’s books.

Larry excuses himself and asks Bibi to come with him, and the firearms manager comes and takes the gun which Herb is wearing to go lock it in the prop cabinet since they won’t be using it any more today. It’s a little strange that they let him wander around with it, but maybe firearms were dealt with more casually on sets in the mid 1980s.

Jessica and Nita wander around a bit and Jessica tells Nita that she came into some money she didn’t expect from foreign sales of her books and wants to dedicate this to Agnes’ care. Nita refuses, saying she wants to pay for it herself. Then Julian comes over and Nita introduces them. They talk a bit and he expresses tremendous affection for the whole cast, calling them his family. After he excuses himself because he’ll be no good for surgery the next day if he doesn’t get some sleep, Nita explains that he’s been the heart of the show for thirty years, and the only concession to his age is the teleprompters, and he’s so good you’d never know he was reading his lines.

Later that night, we get an establishing shot of a building:

Then we see Joyce at her typewriter in her apartment.

Her husband walks in and tries to interest her in spending time with him, but she only wants to work. She suggests he gives Bibi a call if he’s bored—Bibi could break the monotony for him. He tries to tell her that she’s the only one for him, but she’s not interested. He says that he’ll go to the Friar’s club and she suggests that he does.

As he walks off she adds that he better be there because she may call him later and if he’s not there she may be forced to cut his allowance off.

He walks off without replying.

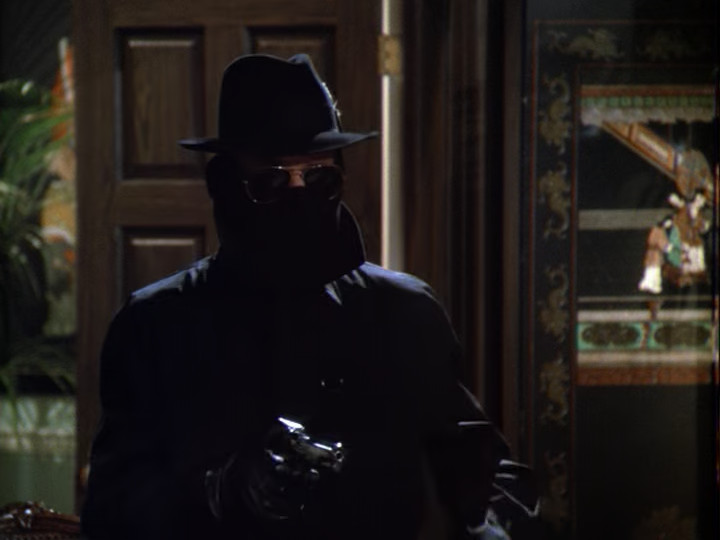

Then the Pittsfield Avenger comes in…

…and shoots Joyce.

The avenger then steals some papers off of Joyce’s desk and leaves.

Then we fade to black and go to commercial. Had you been watching in 1985, you might have seen a commercial like this:

When we get back, after an establishing shot of a building in the day…

…we see Jessica getting ready to leave her apartment.

I mention these establishing shots because, though it’s easy to ignore them, they’re so important to the atmosphere of Murder, She Wrote. They give us a sense of where in the world we are. If all we got were interior shots, we could manage, but would probably feel a bit lost. Consider Joyce shortly before she was murdered:

Without the exterior shot, the first thing we’d wonder when we saw this is, “where is this?” As we try to figure it out, and possibly get that wrong and correct our idea when we see more of the room and realize, “oh, that’s not an office,” we’d be missing the stuff that the scene is here to show us.

Anyway, as Jessica tries to leave she runs into a policeman. His name is Sergeant Kaplan of the homicide division (21st Precinct) and he’s here looking for Nita. He’s got a warrant for Nita’s arrest, and checks the hotel room in case Nita is hiding out there.

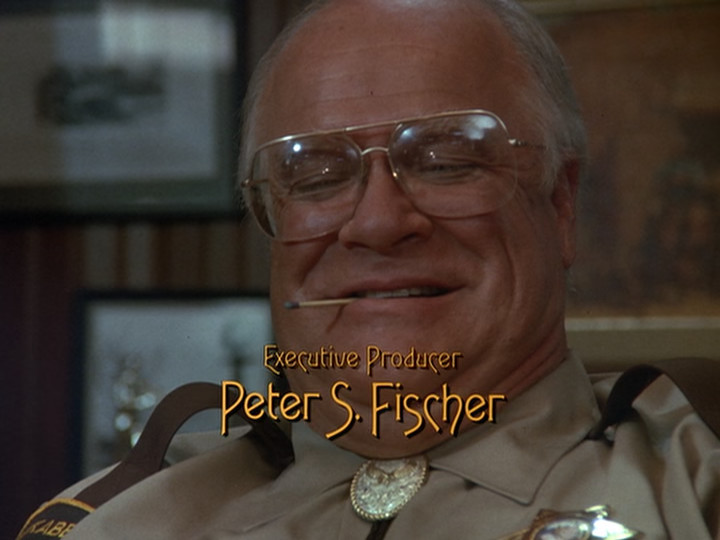

We then cut to the police station where we meet the Lieutenant in charge of the case:

His name is Lt. Antonelli and he’s questioning Larry (Joyce’s husband) about his whereabouts at the time of the killing.

Larry didn’t end up going to the Friar’s club because he was annoyed at Joyce and wanted to give her something to think about if she did call him there. Instead, he went bar hopping and doesn’t remember where. He doesn’t even remember checking into the hotel he woke up in. He then asks if he can go because he’s hung over and just found out his wife was murdered, and the Lt. tells him OK, go, but be someplace reachable.

Right after he leaves, Jessica arrives in the company of Sgt. Kaplan. She and the Lieutenant have a weird exchange where she says that Nita didn’t do it, Antonelli says that he doesn’t read her books, and Jessica says that he’s being grossly overpaid. Antonelli then shows her a photograph of the Pittsfield Avenger and Jessica points out that the entire point is that it’s a disguise and could have been anyone—and everyone had a motive. (Also, since Nita was the only one who wore the costume, it would have been a really stupid disguise for her to choose—which is a fair point.)

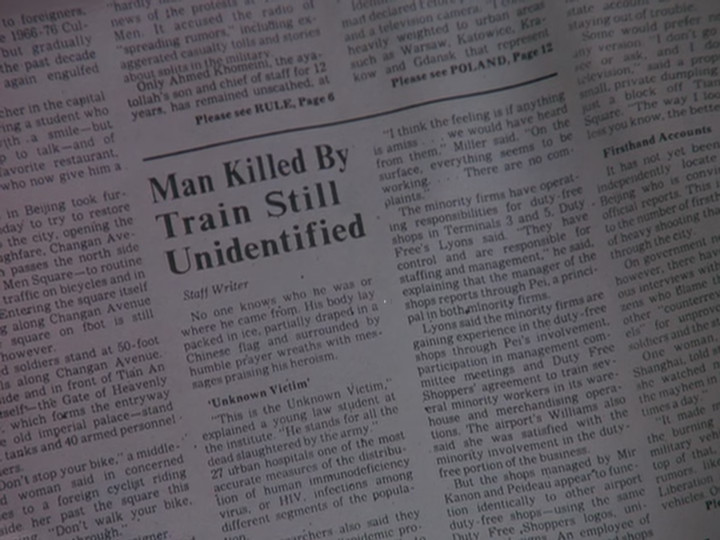

We also get from this conversation that the thing that Joyce was writing, which the Avenger took after shooting her, was a new series bible. (A “series bible” is a document containing the basic facts of a show.) Also, at 9:40pm, some guy by the name of Gordon LaMonica got a telephone call from Joyce that Nita was trying to kill her. The line then went dead and he called the police, who showed up ten minutes later. They found the telephone cord ripped from the wall, obviously caught up in the body when she fell down.

We then get a discussion between Gordon LaMonica, who has been promoted to producer now that Joyce is dead…

…and Carol (the writer) who has been promoted to head writer now that Joyce is dead.

They discuss some things related to the show—they’ll have to shut down production for the day of Joyce’s funeral, the network wants him to continue in the direction Joyce had been going, he’ll just edit around the guy pretending to be shot in the arm, and neither of them will miss most of the cast.

We then cut to Jessica and Agnes talking. Agnes figures that Gordon is lying about the phone call to cover up his own guilt, but Jessica says that the killer was seen at 9:35 and police squad cars arrived at Joyce’s apartment and Gordon’s place less than ten minutes later. Agnes’ protestations that Nita didn’t do it no matter what the evidence suggests are cut short by the phone ringing: it’s Nita.

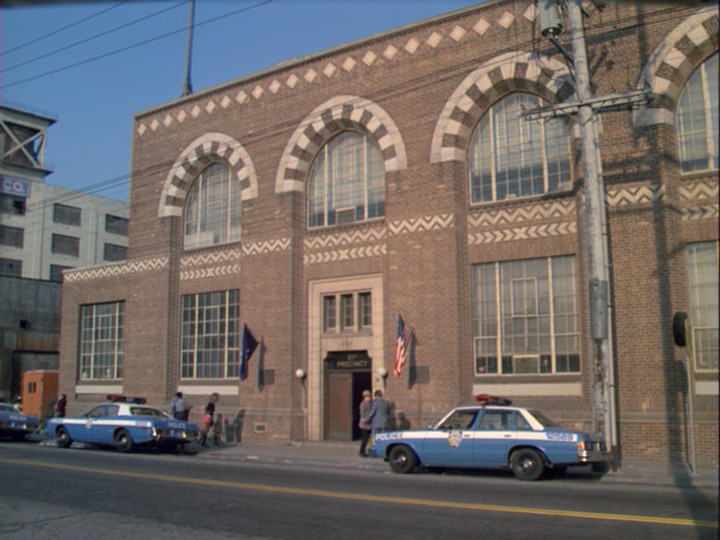

Jessica rushes over to where Nita says that she is in time to see Nita being arrested. Lt. Antonelli tells Jessica that he figured she might forget to tell him if Nita called so he ordered up some phone taps. Jessica tells him that they’re both looking for the same thing but Antonelli says he’s found what he’s looking for. He gets in his car and Jessica gets into the passenger seat, informing Antonelli that she’s riding with him to headquarters. Antonelli accepts this and drives off. We then get an establishing shot of the police station:

As Murder, She Wrote police stations go, this one is remarkably low-effort. There’s one sign saying “21st PRECINCT”, two flags, and two police cars. And you can’t actually read the sign saying “21st PRECINCT” on the DVD version. It’s visible on the Blu-ray, but that’s not what people would have seen when the show aired on TV.

To be fair to Murder, She Wrote, though, the real 21st Precinct police station is not much higher effort. Here it is from Google Maps:

Anyway, we then go inside where Jessica is interrogating Nita, for some reason.

Nita tells the story of what happened, which wasn’t much. There were some new script pages messengered over (to where, we’re not told) and the way they read, they made it clear that Nita’s character would turn out to be the avenger. So she went to Joyce’s apartment to have it out with her. But while she was standing in front of the building gathering her courage, she saw the Pittsfield Avenger come out of the building. She had an awful premonition and went inside, but only got as far as the elevator before chickening out. Then she decided to call Joyce and went outside to look for a telephone. Then she heard sirens and saw an ambulance and realized something terrible had happened. She went back to her apartment. When she saw the police waiting for her there, she drove away and ended up driving half the night.

On her way out of the police station, Jessica runs into Herb and Bibi. They ask after Nita, then after the new script pages that had (apparently) been messengered to Nita, and whether they mentioned any other characters. Jessica coldly replies that Nita didn’t say and leaves. They follow her and Jessica tells them that Nita didn’t do it, so they give her their alibis. Herb was directing a little theater group at the time and Bibi was driving to visit her sister on Long Island, but her sister wasn’t home so she can’t prove it.

As Jessica leaves, Bibi tells her that she can believe what she wants, but everyone liked Nita. And she can believe what she wants, but everyone hated Joyce.

And on that bombshell, we fade to black and go to commercial.

When we come back, we’re at the studio. Julian walks down a medical-looking hall in his doctor’s outfit. He finds Gordon and tries to ask him about the scene where Nita confesses to being the Pittsfield Avenger, but Gordon doesn’t have time.

Julian then runs into Martin (the guy who plays Felix—the guy who got shot and ruined the shot by grabbing his arm instead of his stomach). It turns out that the TV series he was supposed to star in fell through and he has some ideas about how to keep his character alive. Julian wishes him luck but bitterly adds that Joyce’s pernicious influence seems to linger on.

The scene then shifts to an apartment building where Larry Holleran (Joyce’s husband) is looking for a taxi. Jessica happens to pull up in a taxi at just that moment and interrogates him about having said that he left the apartment at 9:30. She knows he didn’t—not unless he was dressed as the Pittsfield Avenger. She then offers to give him a ride to where he’s going and they can talk on the way, and Larry accepts.

Larry explains that he was with Bibi Hartman until three in the morning. He’s got a friend in the building who has an apartment and travels a lot, so he lets Larry use it. Jessica accuses him of the murder a few times and he denies it a few times, then he decides he should tell the police about where he really was before Jessica can and asks the cab driver to go to the “Manhattan Police Precinct.”

The scene then shifts to Carol’s office.

As the scene opens Todd is complaining to Carol that she can’t get him out of the show and she apologizes that Gordon is running things and he won’t let Todd out. As he storms off, Jessica walks in. She observes that it’s a strange situation—those who want to leave must stay, and those who want to stay must leave.

Carol figures that Jessica is there to find someone to give to the police in exchange for Nita. She admits to having a motive, but says that she didn’t do it. “Success isn’t all that great that I’m gonna climb over somebody else’s bones to get there.” (I like that line.)

Jessica has only one question: who did Joyce discuss her changes with? Carol says that Joyce never discussed her plans with her and also never consulted with the network. Jessica concludes that it must have been Gordon.

She finds Gordon and somehow guilts him into meeting with her to answer her questions. They arrange for that night at 8pm at “Barney’s on 49th Street” which is one of the show’s hangouts.

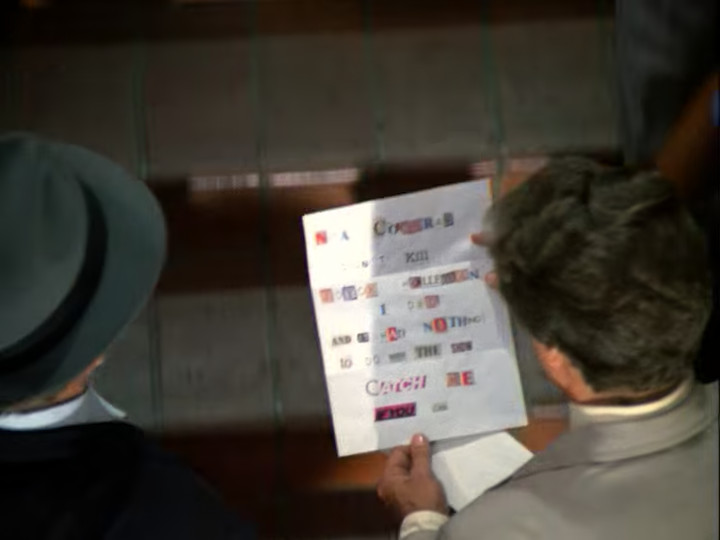

We then see Lt. Antonelli and Sgt. Kaplan walking up the stairs with a note they received:

It reads, “Nita Cochran didn’t kill Joyce Holleran. I did. And it had nothing to do with the show. Catch me if you can.” Antonelli doesn’t take it very seriously.

That night at Barney’s, Jessica comes to wait for Gordon and Bibi spots her. Bibi’s not happy with Jessica because she spent half the afternoon at the police station explaining that while Joyce Holleran was being killed, she was warming the sheets with Joyce’s husband in an apartment four stories down.

Jessica asks if she saw Gordon recently and she says that yes, they just had some drinks together, but Gordon got a phone call about more actor trouble and rushed off to the studio. Upon hearing this, Jessica rushes off to the studio, too.

At the studio, as Gordon is walking around the empty halls in the pitch dark, he hears voices. It turns out to be a recording of a conversation between most of the actors about how Gordon won’t change anything and they need to get rid of him. Then, as Jessica shows up at the front and is stopped by a security guard, the Pittsfield Avenger shows up and shoots Gordon. Jessica and the security guard rush in and find him clutching his arm.

And on that, we fade to black and go to commercial.

When we come back, we get an establishing shot of what I assume is meant to be a hospital, though it looks more like a fancy apartment building.

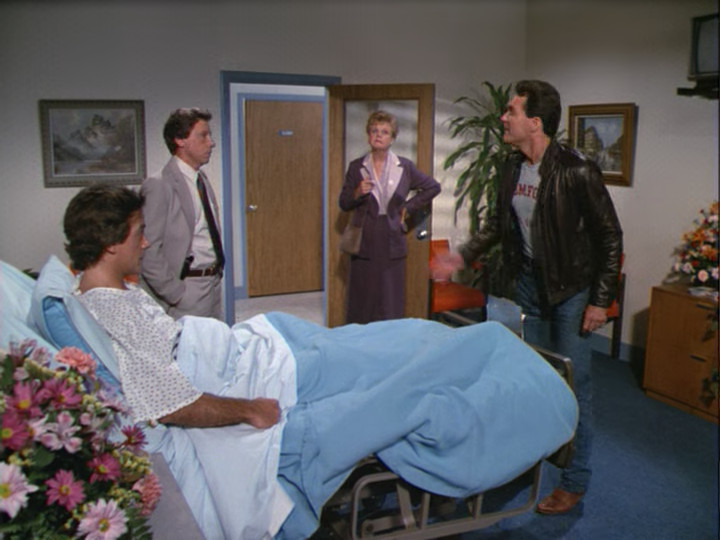

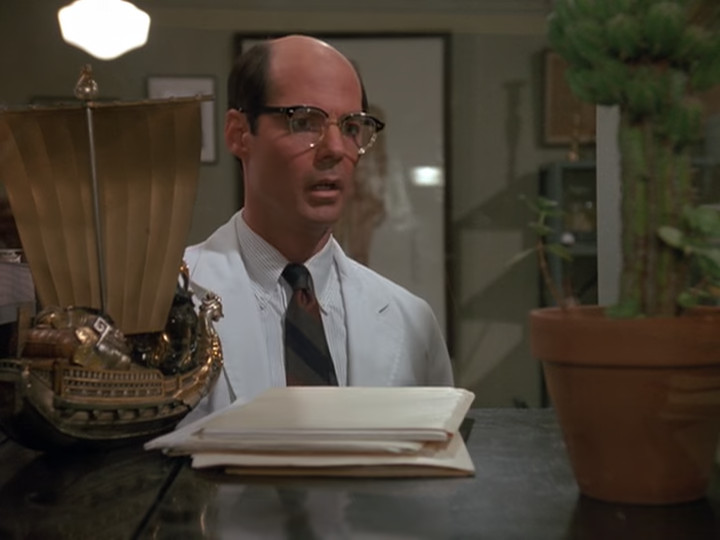

Inside the building Todd is in Gordon’s hospital room explaining how he meant something other than murder when he said that they needed to get rid of Gordon. Also present, it turns out, are Lt. Antonelli and Jessica:

I love how to prevent bare walls there are a bunch of framed paintings hanging up.

Just the kind of thing you’d find in hospital rooms.

Jessica interrupts to point out that if Todd had wanted to kill Gordon, he’d probably have actually killed him instead of shooting him in the arm then running away. The shooter had ample time for a second or even a third shot.

This is actually rather specious; nervous people are notoriously inaccurate with handguns even at close range. And they’re also fairly likely to assume they actually did hit their target, especially if they see the target act like he was just shot.

By contrast, playing a tape of himself threatening to kill the victim does sound remarkably unlikely if Todd was the shooter, and leaving it there after shooting Gordon sounds even less likely. For some reason Jessica doesn’t mention this.

Antonelli then gets a phone call from Kaplan and is informed that ballistics confirms that the bullet they dug out of Gordon’s arm matches the bullet that killed Joyce Holleran. As he tells Jessica this, he happens to mention that it’s a .38 caliber bullet. This startles Jessica, since the publicity still of the Pittsfield Avenger showed her holding a .45 automatic. The bullets, however, were from a “.38 police special”. (The actual round is called the .38 special; it happened to be commonly used by police but was also widely popular among civilians.)

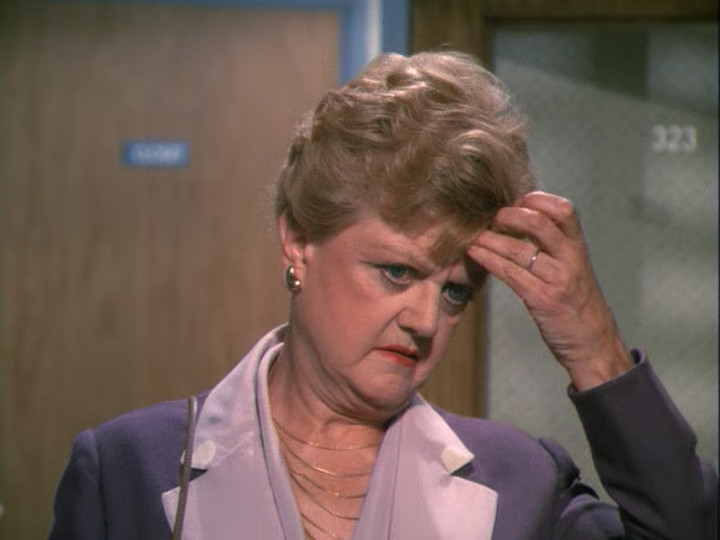

Anyway, this causes Jessica to think:

Jessica says that this changes everything, and asks Lt. Antonelli to come back with her to the studio.

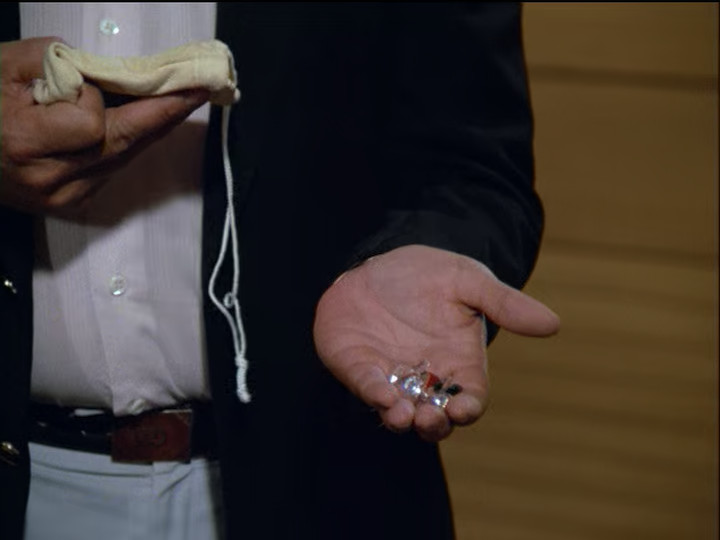

At the studio they inspect the prop guns with the prop master. The Avenger’s .45 automatic has been fired since it was last cleaned, which it was after the last scene shot with the Avenger. Also, its magazine (which they call a clip) is missing one blank. Also, the .38 that Herb’s character uses is missing.

Jessica then has an idea to trap the murderer where she works with Carol to queue some special lines up into the teleprompter. Then, during the taping of the next episode, Nita, in her character of Courtney, comes in the door from the storm “outside”.

She tells some story about escaping from the police in a laundry truck. She protests, “Dr. Goodman, I didn’t kill Felix. I didn’t! I don’t know where else to turn. Please help me.”

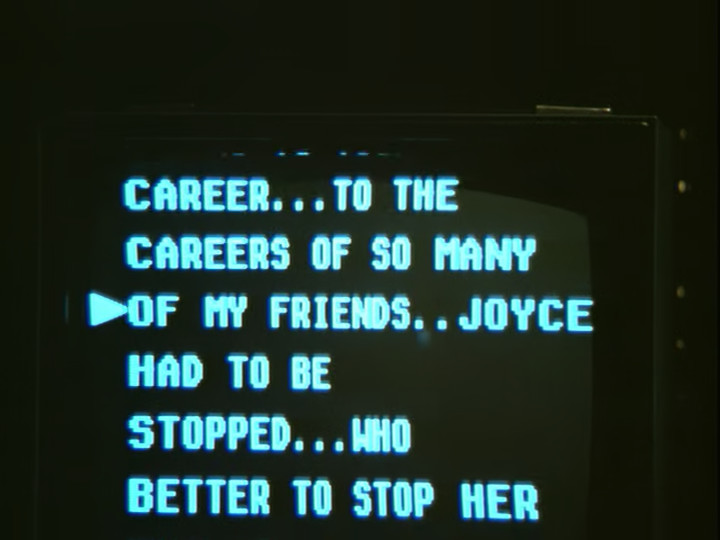

Dr. Goodman (Julian) then says that he won’t let the police prosecute Courtney (Nita) for something she didn’t do. He goes on to say that Felix deserved to die for what he did to her career, and then his speech starts melding into reality, talking about how Joyce was ruining everything. But the thing is, he’s reading off a teleprompter.

He goes on reading it, passionately explaining about it, and we get a bunch of reaction shots of people who seem to accept this as a confession. Except that even at the end, he’s still reading off the teleprompter.

After they cut, Julian asks, “Was that alright, Gordon? I could do it better.”

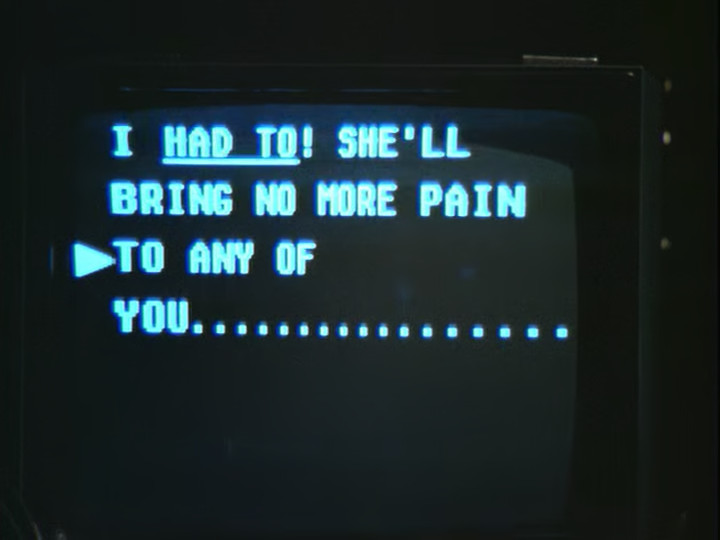

Jessica comes up with everyone else and casually asks Julian how he got into Joyce’s apartment since the front door was locked. Julian is completely unsurprised by this and explains that he made copies of Joyce’s keys—he took them in the morning and returned them in the afternoon. (Thus explaining why Joyce discovered her keys on her desk early on in the episode.)

She then asks him if he used the Avenger’s gun to kill Joyce (she holds it up) and he confirms that he did. Jessica then explains that he didn’t actually kill her, because this wasn’t the gun that did it. This gun was filled with blanks. And, moreover, Joyce was killed with a .38 police special, while the Avenger’s gun was a .45.

The real murderer, Larry Holleran, saw Julian enter the penthouse apartment disguised as the Pittsfield Avenger and followed him. When Julian pulled the trigger and the gun fired, Joyce wasn’t hit, she just fainted from shock. Larry got his gun while she was reviving. Once she called Gordon and said that Nita had tried to kill her, Larry yanked the telephone cord and shot her. After she was dead, he wrapped the telephone cord around her to make it look like she pulled it when she fell.

When Larry objects that she has no proof, Jessica replies that it should be proof enough when the police compare the bullets from Joyce’s body and Gordon’s arm with the one from the burglar Larry shot last year.

Some uniformed officers come in and take Larry away. Lt. Antonelli then tells Jessica that was pretty slick and asks her how she knew that Larry used the same gun as on the burglar. Jessica replies that she doesn’t, but once he makes the comparisons they’ll know for sure. Antonelli then tells Jessica that the bullet from the burglary would have been thrown away months ago. Jessica replies that in that case, since Larry Holleran doesn’t know that, she suggests that he get Larry to confess as quickly as possible.

And on that, we freeze frame and go to credits.

This was something of a mixed episode. On the one hand, the setting, a daytime soap opera, is fun. On the other hand, a lot of things in this episode don’t make much sense. For example, why is Nita an actress if she needs steady income to support her grandmother? Acting is incredibly unreliable as a means of supporting dependents. And why doesn’t she know this? And what on earth is the family tree here? Why do we have no idea what Jessica’s relationship to Agnes is? Why do we have no idea why Nita has a South African accent? All of this is left dangling and makes things a little confusing. To tell the truth, I’m not even sure why Agnes is in the story at all. The actress is fun, but it’s not a big part and doesn’t really add much to the story.

There are some other significantly loose ends, too. For example, why on earth are Joyce and Larry married when Joyce doesn’t even seem to like Larry? Why is Bibi with Larry? How does she even know him? Sure, the husband of a new show runner might visit the set once or twice, but that won’t give much of an opportunity for kindling a romance and besides, what’s Bibi’s motivation? Sleeping with your boss’s husband isn’t a way to help your career, so are we to assume that Bibi and Larry just have an amazing emotional connection? Is he supposed to be irresistibly attractive? But if so, why does no other woman act like he’s attractive?

The character of Julian is a bit weird, too. Most of the time he seems so addlepated that he almost feels senile. (The actor, Lloyd Nolan, was 83 at the time of filming and actually died a few weeks before this episode aired, so it’s not clear how much of this was acting.) But he also seems to have executed some relatively complex plans. He stole Joyce’s keys and returned them without being noticed, and he also managed to get into the locked gun closet. Also, he stole and returned the Avenger’s costume without being noticed. This seems a bit complex for a guy who often barely knows where he is.

And then there’s the issue of how both Julian and Nita know Joyce’s home address. It wouldn’t be impossible to find it out, but it would certainly take some work and is the kind of thing that really needs an explanation. Especially for Nita, who would have had no reason to find it out.

Another open question is how on earth Larry got the tape of the cast discussing the need to eliminate Gordon since he’s no better than Joyce. It’s a bit weird that the cast had a meeting to discuss Gordon since they didn’t seem to generally talk with each other, and it’s even weirder that it happened in a way where Larry was able to record it with decent sound quality for all of the people speaking. Also, why did he do this? Why did he shoot Gordon? Was that merely misdirection? And why shoot Gordon in the arm rather than the heart? And why did he keep the murder weapon when it would link him to the crime?

Also, the timing of the events of the murder is a bit weird. It’s natural enough that Larry would notice Julian coming into his apartment dressed as the Pittsfield Avenger right after he left Joyce’s room, but how did he even come close to having an alibi with Bibi? There’s no way she would have thought he was with her before Joyce was killed. I suppose she might not have cared (giving him an alibi also gave her an alibi), but this really should have been dealt with. And waiting for someone to meet you at a particular time is the kind of thing where you tend to check your watch. On the other hand, why was Bibi waiting for him? Moments before Julian came in Larry was trying to seduce Joyce. What was he planning to do if he succeeded? Or did he know he would fail and he just thought it was good to keep up appearances? But Joyce didn’t believe him so what did that matter?

Also, why did Bibi go home at 3am? At that point, it would have been way more convenient to just sleep in Larry’s friend’s apartment, and it’s not like the friend was coming back and they needed to vacate it in time. And where did Larry go? Did he in fact go to a hotel at 3 in the morning? If so, why? In theory, he didn’t know Joyce was dead so wouldn’t going home have been the natural thing to do?

Also, when did Larry decide to kill Joyce? Right up until Joyce happened to tell Gordon that Nita tried to kill her, nothing about what happened made Larry any less likely to get caught. The Pittsfield Avenger was only a mildly conspicuous character and at this time of night, the odds of him being noticed sufficiently close to the apartment building downstairs weren’t very high. Without that, there was no physical evidence of the Pittsfield Avenger having shown up, in which case all the police would have to go on is the wife being shot with the same caliber bullet as the gun the husband owns and the husband having no alibi. It’s not like Larry could have told the police the Pittsfield Avenger came into their apartment and shot Joyce with a blank.

Incidentally, why did Joyce collapse from being shot with a blank? I would imagine thinking you’re getting shot is traumatic, but what would be the mechanism of collapsing unconscious? It’s hard to imagine that the illusion of having been shot would last more than a second or so. Instead of fainting, wouldn’t the normal reaction be to indignantly ask if this is some kind of sick joke?

Also, what was up with the weird note to the police made from cut-and-paste letters? Who sent that? The obvious person would be Julian, since he was the only person with a motive (other than Jessica). But it’s a bit weird that nothing came of it and it was only mentioned again once, in an off-hand way with no significance.

Curiously, the most glaring plot hole turned out to not be one: the teleprompted confession of Julian. It was very strange, watching the various people around the studio reacting as if Julian was actually confessing. And then Jessica asking Julian about how he got into Joyce’s apartment as if he had actually just confessed when clearly he hadn’t—his asking Gordon how his delivery was and saying he could do it better made it clear he thought of it as reading lines. But this does bring up the question of why, when Jessica asked him how he got into Joyce’s apartment, Julian confessed to what he thought was the murder at that point. Was he doing it to protect Nita? But why decide that in this moment rather than before (or after)?

Whatever the answer to that question, when Jessica revealed that Julian didn’t actually kill Joyce, it did make her part make more sense (she was only pretending that she thought it was a confession). Though, now that I say that, what was the point of the charade? Normally, this kind of charade is there to trick the real murderer into confessing. In this case, all it did was trick the real murderer into being accused and still denying it. Jessica could have done that anywhere, at any time.

And now that I say that, it occurs to me to ask why Larry Holleran was there at all. This was, so far as most people knew, a taping of an ordinary episode. Larry had no connection to the show whatever, now. So why was he there?

To be clear, it’s not that I expect the writers to completely rewrite the scene to be someplace else it makes more sense for Larry to be. What I expect is some kind of explanation given for why he’s there. It would only take a sentence. Something about him being there because this is Joyce’s last script and they want him to see it for her. It doesn’t need to be super-plausible, since they would be setting him up, it would just need to be awkward for him to say no to.

Also, the thing with Julian “confessing” really should have tied into Larry making some kind of slip-up. That’s the whole point of deliberately accusing the wrong person, after all—to trap them by making them so eager to blame the wrong person that they reveal something they shouldn’t. It serves no purpose to go through with it then say “nevermind” and proceed as if nothing had happened.

Well, that’s the plot. When it comes to characters, this episode is also a very mixed bag. On the one hand, it had some very vivid characters. Bibi Hartman and Herbert Upton particularly come to mind. Carol the new writer was another stand-out character. This was more in moments and in no small part up to the actors, so it may not have come through in my summary, but they were interesting.

The problem was that none of the characters had a motive for anything that they did. Though I need to clarify that, since I mean it in the literary, rather than the detective, sense.

The detective sense of “motive” is an answer to “cui bono” (who benefits?). Plenty of people in this episode acted according to their rational self-interest, and so had motives in the detective sense.

The literary sense of “motive” is related, but focuses primarily on the goals of the character. Real life is indescribably complex; in every moment there are many things a person might be doing and in choosing one they are rejecting all of the others. Thus the goods which might be achieved by the other actions are being sacrificed to the good which may be achieved by the action actually chosen. This is part of the meaning of “you have to serve somebody.”

To do anything is to trade almost limitless potential for very limited actuality, and this choice intrinsically elevates the actuality chosen against the potentiality which has been left unchosen. If you spend an hour learning French, you are giving up spending that hour learning Latin, Greek, Chinese, Korean, Russian, Spanish, Japanese, or how to do an around-the-world loop with a yoyo. Every action may or may not achieve its goal, but it certainly does not achieve most possible goals. Every action is, whatever else it may be, an act of sacrifice. And every act of sacrifice enshrines a hierarchy of values. To learn the word for “teapot” in Russian is to declare to the world that, in this moment, it is better for you to learn the word “чайник” than to learn the words “茶壶”, “τσαγιέρα”, “주전자”, or “théière”. Also that you learning the word “чайник” in this moment is more important than having lunch, knitting a sweater, riding a motorcycle, or teaching your prize-winning flea to jump through a tiny hoop.

So, for any human being, to understand what they’re doing, one must know the answer to the question: what do they want? What they want does not merely explain what they do, but also—and sometimes more importantly—what they don’t do. Not just why did they do this, but why did they give that up?

This is the literary sense of motive, and this is what none of the characters had.

Oh well. Next week we’re in a university in Vermont in School for Scandal.
