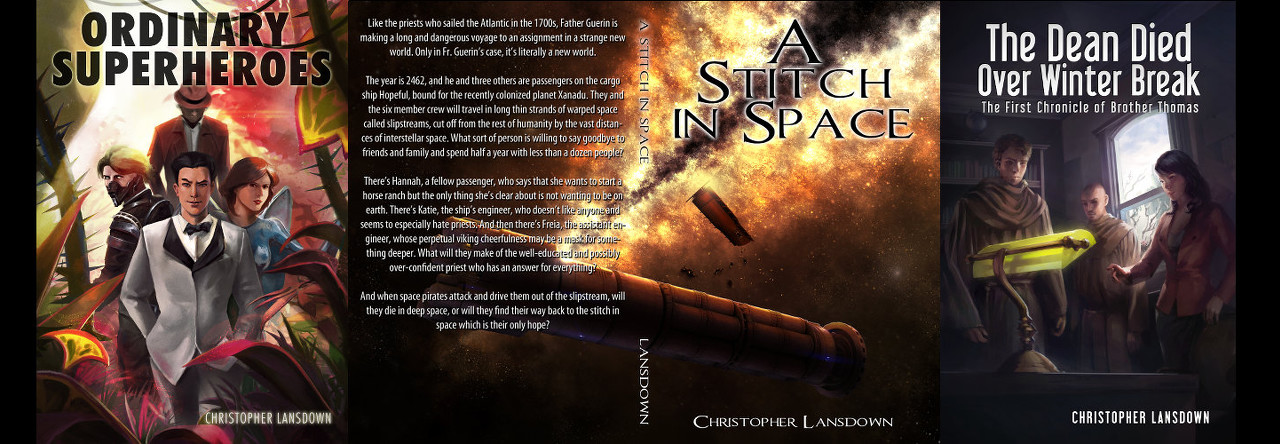

On the seventeenth day of March in the year of our Lord 1985 the eighteenth episode of the first season of Murder, She Wrote aired. Set just outside of Cabot Cove, it was titled Murder Takes the Bus. (Last week’s episode was Footnote to Murder.)

The episode actually begins with Jessica and Amos discussing their travel plans to some kind of meeting of the Maine Sheriffs’ Association. Since the car isn’t working and they’ll have to take the bus, they’re likely to miss the hors d’oeuvres, which disappoints Amos greatly.

But they should be there in time for the drawing—they’re giving away a big screen TV—and Amos feels that it’s his lucky night. (At the time, a “big screen TV” would have been a large, heavy cathode ray tube TV whose screen measured around thirty inches, or perhaps a little bigger. There were projection televisions of the time that might measure up to sixty inches, but they were extremely uncommon, especially because they had pretty poor picture quality, even by the standards of the day.)

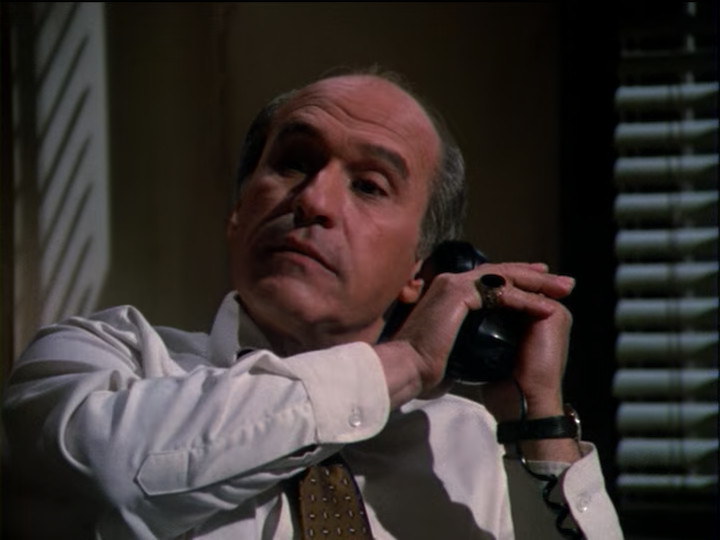

At the bus stop we meet a few characters. Here’s Cyrus Leffingwell. He’s got a thick Maine accent and likes local buses because you can sit back and enjoy yourself.

Also, from the smell of the air (and the occasional bit of thunder that we can hear) he predicts that it will be raining in twenty minutes.

A moment later the bus comes and people begin to board. Jessica is surprised to see a new bus driver, as a fellow named Andy Reardon normally runs this route. The bus driver explains that Andy has the flu.

There are not a great many people on the bus, but we get a look at a few of them.

This is Kent and Miriam Radford. Kent is a professor. Miriam recognizes Jessica—she’s a fan.

Sure enough, the storm overtakes the bus and it begins to rain hard before long.

Also, and probably not by coincidence, though it’s unusual for Murder, She Wrote, the first shot we get of the bus driver’s face coincides with the guest star credit for the actor playing him.

As the bus makes its way through the stormy night, it comes up to the state prison, where a man who has been standing in the rain hails the bus. We know it’s the state prison because of an establishing shot of a helpful sign:

The man gets on and looks around, noticing something that gives him pause.

He’s going to Portland and doesn’t have a ticket, but apparently on this bus line you can pay the fare in cash, which he does. After receiving his change, he silently walks to an available seat and sits down.

Jessica notices the book he’s holding.

The original shot was very dark and I could barely make out the title, so I edited it to increase the exposure. It’s a well-worn copy of The Night the Hangman Sang. (So far as I can tell, that’s not a real book.)

A bit later, they run into an obstruction. A man in a yellow raincoat boards the bus for a moment to explain that power lines are down, and while they can get through, they need to be very careful. There is also a fair amount of flooding. The road is open, but the man doesn’t know how long it will stay that way.

Quite unusually for Murder, She Wrote, we’re about five minutes into the episode and still getting the occasional credit. This is quite a slow opening, though the suspenseful music helps by letting us know that it is going somewhere.

After the bus has been on the road for a while, Miriam gets up and moves to the seat behind Jessica to introduce herself. She’s a huge fan and tells Jessica that she’s on Miriam’s top-ten most-stolen list (Miriam is a librarian). They’ve had to replace Jessica’s books dozens of times over the years.

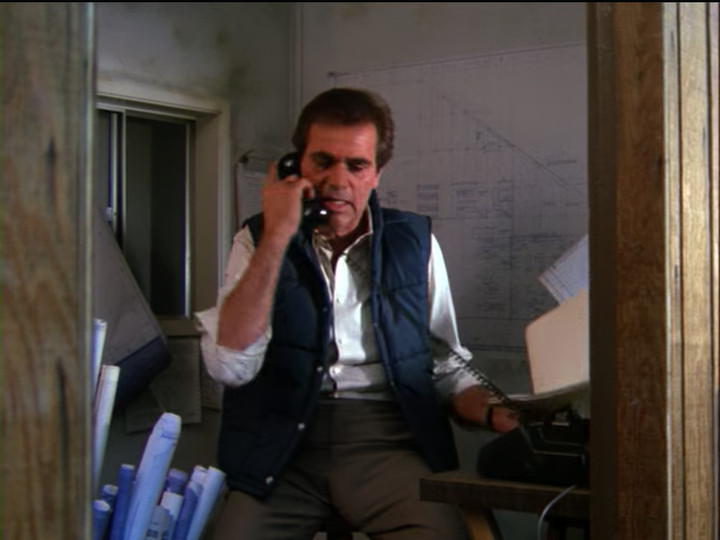

Some time later, a man who has just gotten out of a broken-down car hails the bus. He gets on and asks what the fare to Portland is.

The bus driver asks if he was the one following the bus for quite some time, and he replies that he was; he thought it would be safer with the bus taking the brunt of the storm. He adds that he’s now sorry he passed the bus, then finds a seat.

As he puts his coat into the overhead compartment, he inadvertently reveals that he’s carrying a gun.

Jessica notices, and some sinister music plays.

Some time later, the bus pulls up to a diner. The bus driver calls back to the passengers that they seem to be having some engine trouble. They’re welcome to get out and stretch their legs while he checks it out.

As the passengers shuffle off the bus, Jessica notices the name of the bus driver.

Inside the diner, as the people from the bus file in, we get some characterization. The owner of the diner is surprised to see them—he heard on the radio that the road was closed—but friendly. The professor (Kent) says some extremely nerdy things which confirm his professorhood. There’s also a little bit of bickering, which helps to establish how much people would rather get to their destination than be inconvenienced.

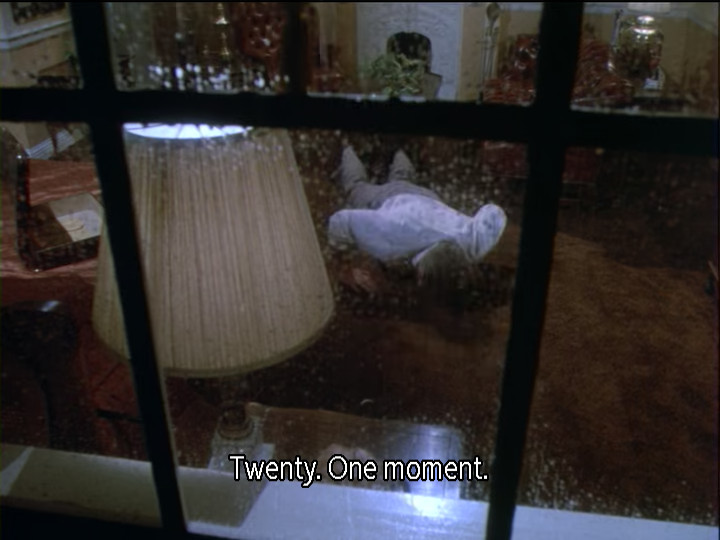

When Amos gets up to look at the menu, Jessica notices something in the bus out the window.

I’ve upped the brightness in the dark areas a bit, but even so, you can’t really tell who those people are. They do seem to be having a bit of an argument, though—there are some angry gestures.

A while later, after Jessica and Amos have finished the pie they ordered shortly after coming in, the bus driver comes in and says that they won’t be leaving soon; he just needs to rest for a bit. Amos goes to a payphone outside to call Portland and let them know what’s up (it turns out Jessica is supposed to give a speech at the event), and Jessica goes out to the bus to get the book she was reading and forgot to bring in with her.

On the bus there is only the man who was picked up just outside of the prison, apparently asleep. When Jessica tries to wake him for some reason, his head lolls over and it turns out that he’s dead.

And on that bombshell, we fade to black and go to commercial.

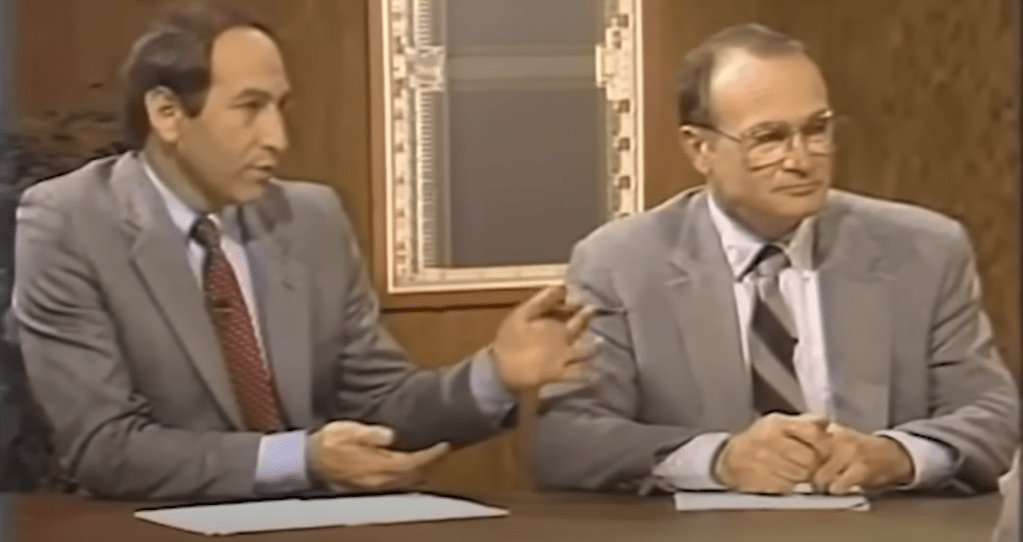

Had you been watching in 1985, you might have seen a commercial like this:

When we come back from commercial break, Jessica has brought Amos and they’re examining the body. He suggests notifying the bus driver and not moving anything until the coroner arrives. Jessica convinces Amos to at least do a little investigating, even though he’s out of his jurisdiction, because the killer had to be one of the people on the bus and it will be some time until the authorities arrive.

Amos consents and checks the corpse’s pockets, but there’s nothing in them.

Jessica remarks that it’s ironic that the man should be killed the very day he’s released from prison. I don’t see how it’s ironic in any way, but they had to work in the fact that he was recently released from prison somehow. Anyway, Amos objects that he could have been a visitor or a weekend guard. Jessica doubts it, though. He’s wearing a new suit, he has on new shoes, and he paid for his bus fare with crisp new bills.

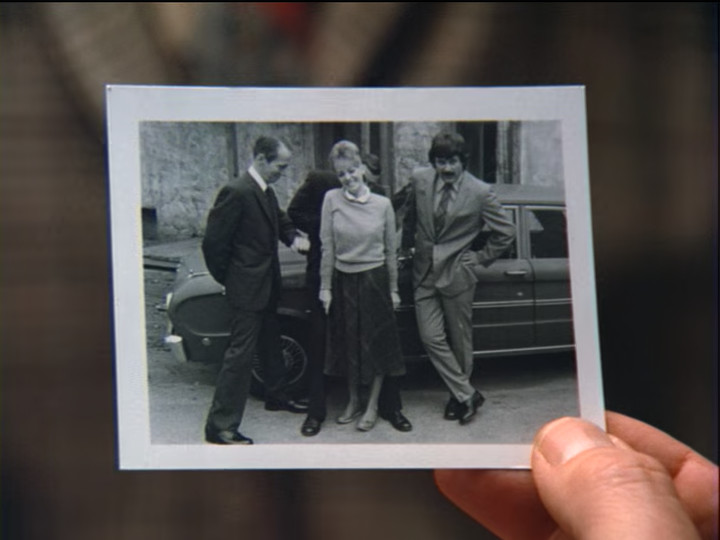

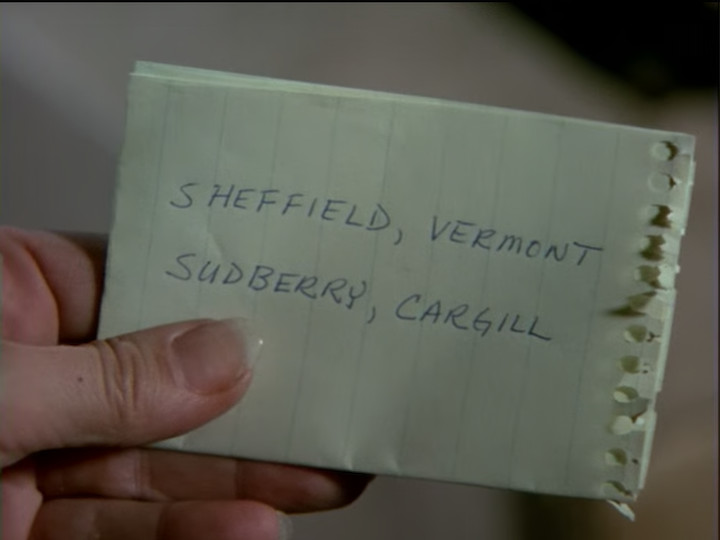

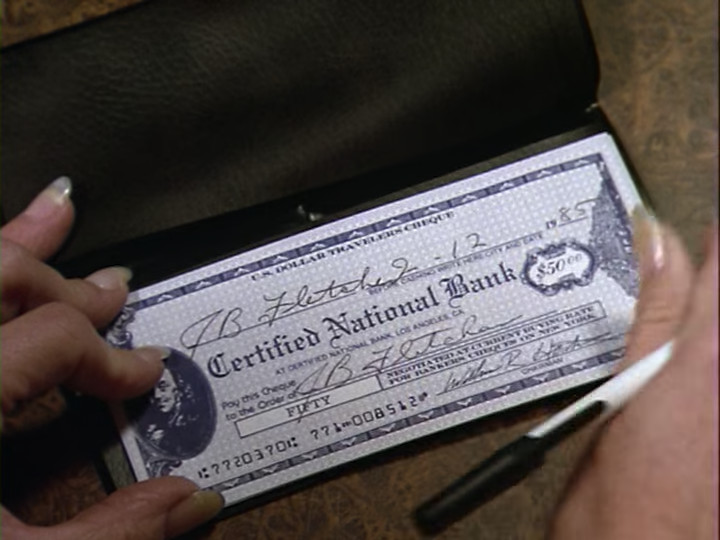

Looking around, they find his wallet on the floor. It contains the man’s release paper—his name turns out to be Gilbert Stoner—some money, an out-of-date driver’s license, and a photograph. Jessica concludes that someone was looking for something. Then she notices that Gilbert’s suitcase is missing.

She then looks down at the body and in a flash of lightning she notices some smudge marks on his neck and on the collar of his shirt.

Just then Miriam comes onto the bus to get a book. She then sees the corpse, screams, and nearly faints.

The scene then shifts to some time later, with Kent comforting his wife as she cries about how awful it was. Cyrus then walks in and says that he tried to call the police, but the phone line appears to be dead.

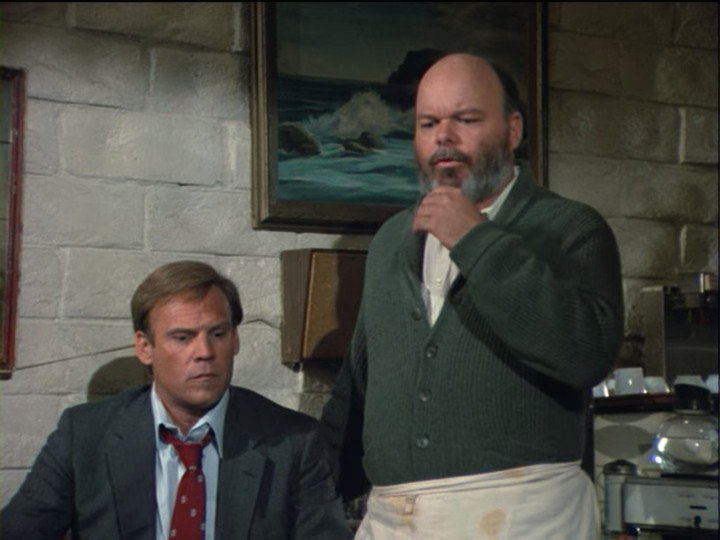

The owner of the diner brings out some coffee for everyone and tells them that it’s on the house (an expression meaning that the establishment is paying for it and there’s no charge to the people receiving it).

Amos then gets up and introduces himself. While he has no jurisdiction here, he has an obligation to assume authority until the local police arrive, and he hopes that they will cooperate.

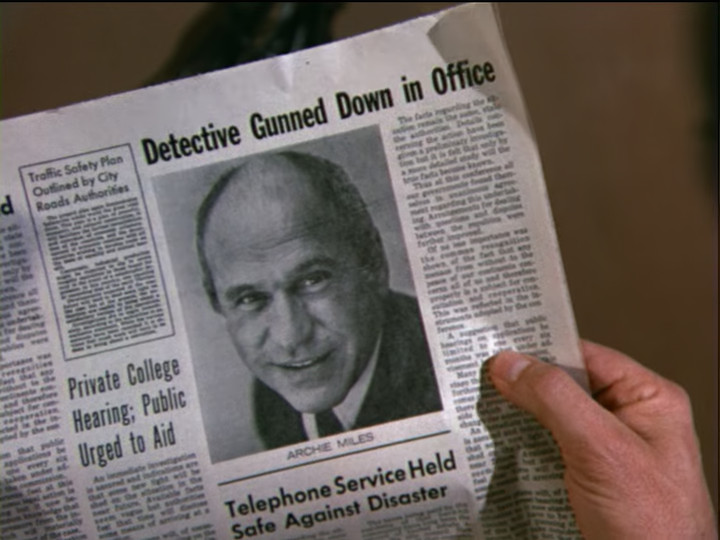

Jessica then remembers where she heard the name “Gilbert Stoner” before. It was during some research she did for a book. He was involved in a bank robbery in Augusta. (Augusta is the capital of Maine, about fifty miles northeast of Portland.) This rings a bell for Amos: the Danvers Trust Company.

The owner of the diner speaks up, saying that he remembers that being all over the TV for weeks…

…about fifteen years ago.

Kent then rattles off some information about it. Three men pulled it off but were apprehended. Cyrus concurs, though he says, “at least one of them was.”

At Jessica’s prompting, Amos then asks for everyone’s names, why they were on the bus, and where they were at the time of the killing. There is some grumbling at this and someone remarks that, “Obviously, he thinks that one of us killed him.”

Amos replies, “I think ‘obvious’ is the right word, sir. Unless, of course, this Stoner fellow somehow managed to reach up behind his head and stab himself in the back of the neck with a 10-inch screwdriver.”

Amos sometimes has a way with words.

Kent and Miriam introduce themselves: he’s an associate professor of mathematics and she’s a college librarian (the head librarian, she points out). They’re on their way to Boston to do some research. Kent says that he was in the “video alcove” playing “Road Hog.”

Cyrus says that Kent is telling the truth—he heard Kent playing the game while he (Cyrus) was in the gift shop. Why a diner would have a gift shop, no one says. Cyrus mentions that he’s from Woonsocket, Rhode Island, is a retired mailman, and has no idea who the poor dead fellow is.

We then meet a young couple who bickered occasionally on the bus, in terms so suspicious-sounding that I was immediately convinced they’re red herrings.

He’s Steve Pascal, and the woman is his wife, Jane. He’s a computer engineer, and they’re on their way to Portland. She was inside the whole time, and he was outside trying to use the public phone. He couldn’t get through, and eventually the line went dead.

Jessica interrupts to say that she saw him through the window having a heated discussion with Stoner on the bus. Pascal replies that it wasn’t heated at all—they just exchanged a few words, no more.

We then meet Joe Downing.

He’s captain of the fishing trawler MarySue, out of Gloucester. (Somebody had fun with the names here.) He’s going back to his boat after visiting family and, like Cyrus, has never heard of Stoner before. He was in the bar, having a drink. (Earlier, he asked the owner of the diner if it was possible to get a drink, and the owner said yes, but he’d need a few minutes to open the bar. This diner has a remarkable number of amenities.)

We then meet the guy who got on the bus after his car broke down. His name is Carey Drayson. He was in the men’s room drying off his clothes on the radiator. He adds that if his car hadn’t skidded off of the road, he wouldn’t have been there.

Jessica asks why he’s carrying a gun and in response he shows Amos his permit to carry a concealed weapon. He’s a jewelry salesman and needs to protect himself since he carries valuable jewels in the case he keeps with him.

The Sheriff then asks the bus driver about the screwdriver. He replies that he left the toolbox open in the front of the bus and anybody could have taken the screwdriver out. He was working on the engine the entire time so he wouldn’t have seen. He thought he heard some people get on and off the bus, and he heard some raised voices, but he didn’t pay attention.

Jessica then questions Steve Pascal. She says that he was lying about his conversation with the victim being peaceful. She further says that his resemblance to one of the people in the photograph that the victim was carrying is probably more than coincidental.

Without saying anything Steve gets up and takes a look at the photo.

He looks for a bit, then says that he doesn’t have to answer Jessica’s questions, or anybody else’s either, and walks off.

Jane (his wife) comes over and looks at the photo. She protests that she knows Steve didn’t kill Stoner. Amos asks who the man in the photograph is (he doesn’t specify which of the three he means), and she replies that “he” was Steve’s father. He was killed in the Danvers robbery along with an innocent bystander. The bystander was a woman, but Jane doesn’t know more than that. Stoner and the other man got away, but they caught Stoner three days later. They never caught the other man and never recovered the money from the robbery.

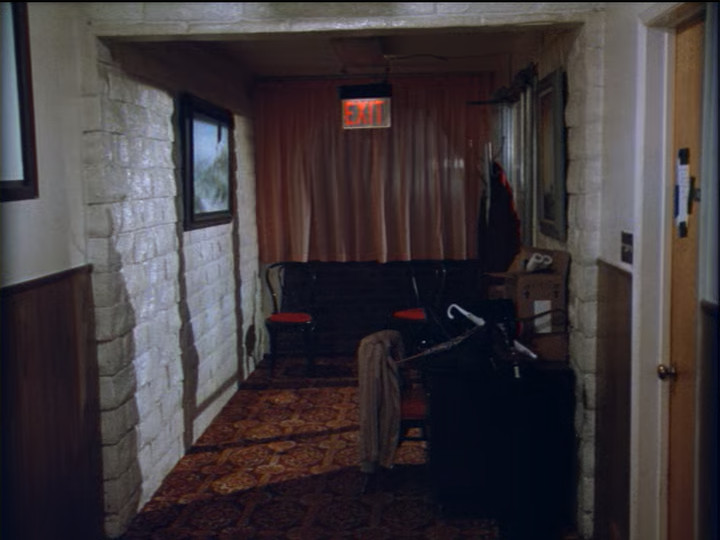

Jessica goes to investigate and we get some shots of various parts of the diner.

Jessica ascertains that the Road Hog video game makes plenty of noises as if one is playing even while no one is there—that was fairly common for arcade games of the time.

We also see a bit of what I assume is the gift shop:

Down at the end of the hallway is a door leading to the outside:

Amos tallies it up: nearly every area anyone was in at the time of the killing has a door to the outside (the bar and kitchen do as well), which means that anyone could have done it. They then decide to check outside.

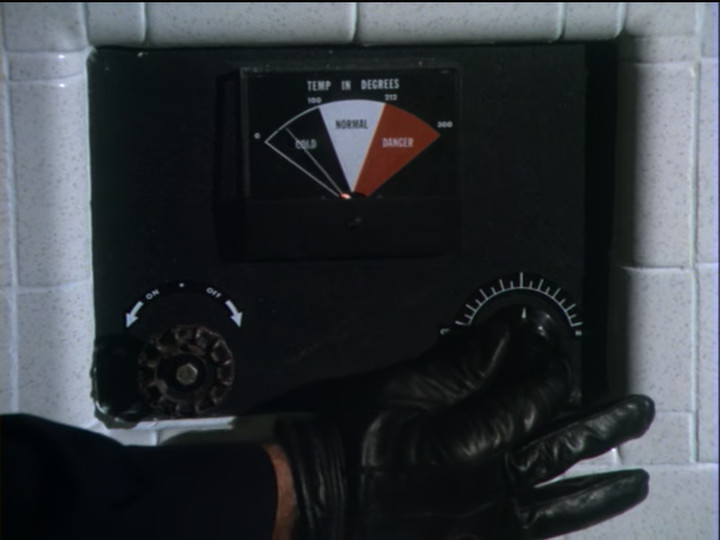

In the bus, Amos notices a light that concerns him. It suggests that a “damper switch” is on. (Amos mentions that he worked as a bus driver for a summer before he joined the police force.) Jessica then goes around checking the doors and finds that the door to the kitchen is unlocked. She checks the next door (the one to the hallway), but before she can open it she notices some clothing on the ground. As she investigates, the door opens and Steve is there, glaring at her and looking as ominous and menacing as humanly possible.

And on that bombshell, we fade to black and go to commercial.

When we get back from commercial, Steve says that he wanted to talk to Jessica, and she replies that she thought he might. He apologizes for losing his temper but insists he didn’t kill Stoner. She doesn’t acknowledge this but instead asks him to help her get the suitcase inside; it’s Stoner’s, and it’s getting wetter by the minute.

Inside, she and Amos inspect the clothing while Steve and his wife watch. After they don’t find anything, Jessica asks what the argument was about.

Steve says that the bank robbery ruined his life (he was in junior high when his father died, and from that moment on he was the son of a thief), and he took the bus because he wanted to meet Stoner and demand his father’s share of the money. But when he met Stoner, he found a wreck of a man. The robbery had destroyed Stoner’s life as it had Steve’s father’s, and Steve decided then and there that he wasn’t going to let it destroy his own, so he just walked away.

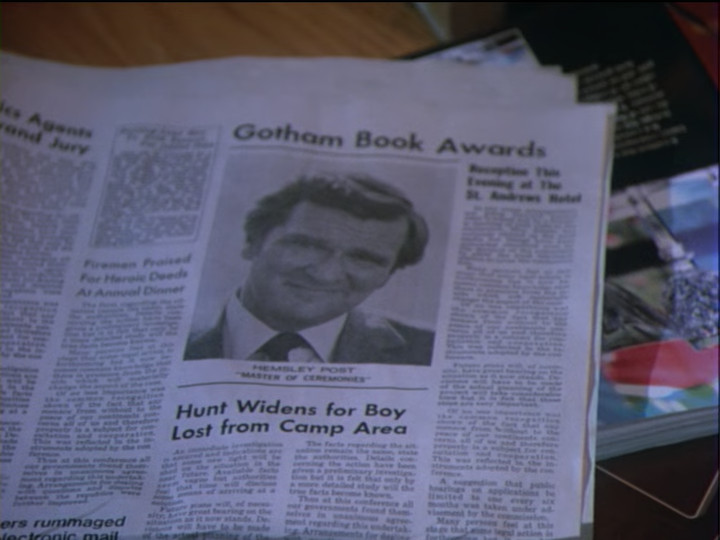

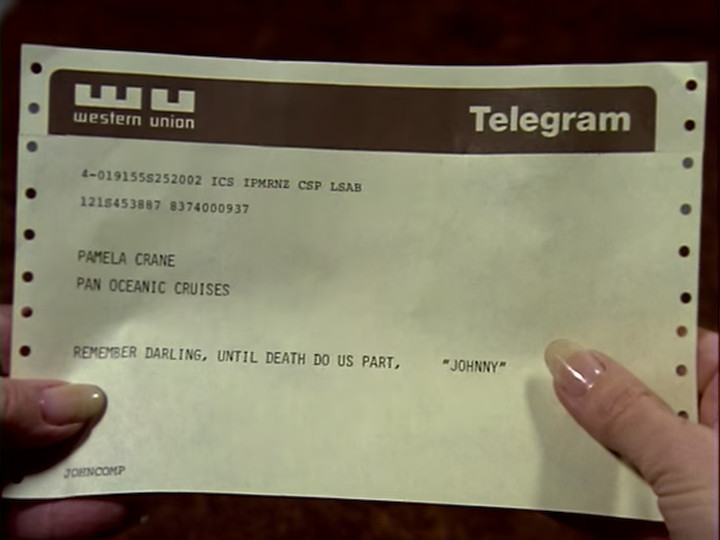

Jessica asks how Steve knew that Stoner would be released today. In reply, Steve pulls out the newspaper clipping that announced it. Amos reads the clipping aloud, as it gives some more details. The innocent bystander who was killed was Julie Gibbons, who was 16.

The coincidence of the girl’s last name and the bus driver’s last name is not lost on anyone. And Amos tells Jessica that he had figured out who did it half an hour ago—presumably a reference to what he found out when he investigated the bus.

Back in the main part of the diner, Amos makes a citizen’s arrest of Ben Gibbons. He explains that he noticed that the damper switch was thrown, and that the damper switch is to be used only in the emergency of a runaway engine. Once it is thrown, the engine cannot be restarted until the damper switch is reset by hand. The reset is way in the back of the bus and cannot be reached except with some kind of tool, like a very long screwdriver. Amos takes this to mean that the bus driver needed the screwdriver himself, so no one else could have taken it: he had it the whole time.

There are some flaws in this logic. While the damper switch being thrown does suggest that Ben threw it in order to waylay the bus, if the switch had not yet been reset by the time Amos inspected it, then Ben never reset it, and there was no reason to conclude that he must have had the screwdriver. Also, Ben’s wearing a raincoat suggests that he was working outside the bus, and Amos seemed to go outside when he saw the damper switch light and excused himself to go look at something. So to murder Stoner inside the bus, Ben would have had to take out the long screwdriver, then go inside the bus to murder Stoner, then leave the screwdriver there for some reason. All quite possible, but none of it is an obvious conclusion from Ben having sabotaged the bus.

Anyway, Jessica interrupts to ask Ben a question about the Danvers case, pointing out the last name of the girl who was killed. He admits that Julie Gibbons was his daughter. He has dreamed about revenge every day since she died. When he heard about Stoner’s release, he switched routes with the regular bus driver and faked the breakdown. He worked on the damper until Stoner was alone. Then, when he went back into the bus, Stoner was sleeping like a baby. This enraged him so much that he stabbed Stoner in the neck with the screwdriver.

When Cyrus says thank God this ordeal is over, Jessica gives him the bad news that it isn’t. Ben may be convinced that he killed Stoner, but Stoner wasn’t sleeping when Ben stabbed him. He was already dead. There was very little blood on the screwdriver and around the wound because he had been dead for at least fifteen or twenty minutes and the blood had begun to settle in the lower parts of the body. She’s convinced that the coroner’s report will show that Stoner died of strangulation.

After Amos goes outside to try the pay phone again (the line is still out), the diner owner remembers that his son has a CB radio in the back room. He has no idea how to use it, but if anyone here does, they’re welcome to try. Carey Drayson, the jewelry salesman, says that he knows how. He, Amos, and the owner of the diner go off to try. Jessica notices that Carey left his briefcase on the table.

Some time later, when Carey is alone in the room trying to hail someone on the CB, Jessica comes in and remarks that he’s awfully careless with his jewels, if indeed there are any in his briefcase, which she doubts. When she asks if Sheriff Tupper can take a look inside, he says not to bother and hands her his real business card.

He’s an investigator for the company which insured the Danvers Trust robbery. He was assigned to follow Stoner in the hopes of being led to the money. That’s been made more difficult, but he holds out hope that if they find the killer it might lead to the money. Jessica, however, isn’t so sure that it’s that simple.

Back in the main room, Jessica and Amos discuss the case over coffee. Clearly, somebody was looking for something in Stoner’s suitcase, but did they find it? And where were the overcoat and the book? Why weren’t they with the suitcase?

On a hunch, Jessica says that they need to go back to the bus. There, Jessica realizes that Stoner’s body isn’t in the seat he was sitting in on the trip. He had been sitting several rows back. In that seat, Jessica finds the overcoat and the book.

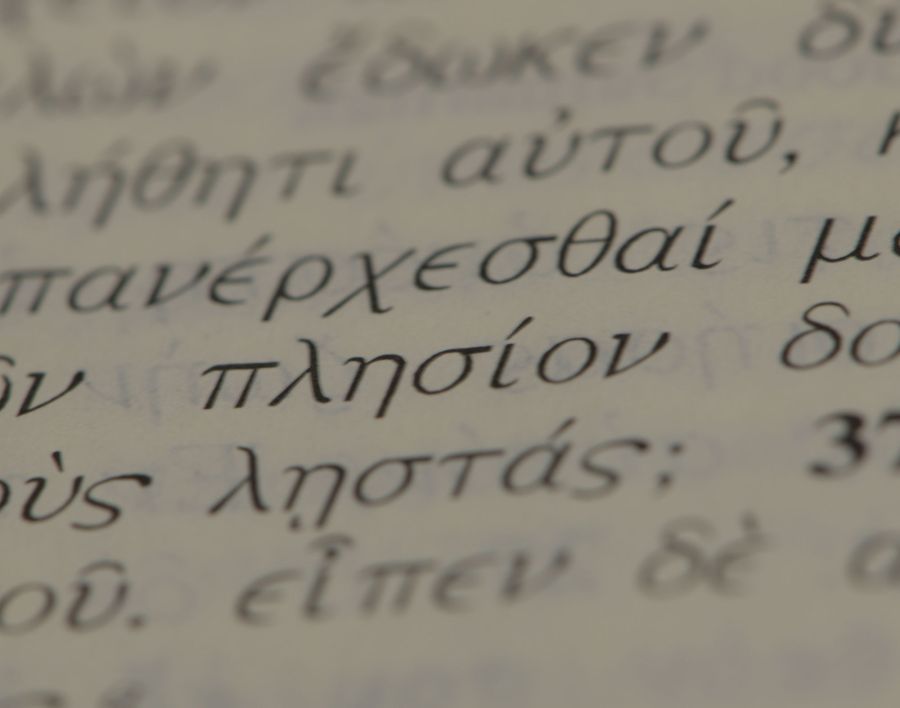

Back inside, Jessica examines the book. She finds it very strange that while the dust jacket is in tatters, some of the pages aren’t even cut. (Books printed in print runs, as all of the books back in the 1980s were, use extremely large sheets of paper that are then folded up into signatures and cut. This cutting process is occasionally imprecise and leaves a folded edge intact, requiring the reader to cut it himself. By the 1980s this kind of manufacturing defect was rare, but not unheard of. I can recall having to cut a page, once.)

The power then fails. The owner of the diner tells everyone to not worry—he has a generator out back. He and Amos go together to get it started. In the dark, someone leaves the room but we can’t see who. Moments later, a shot rings out and Jessica says that it came from the office where Mr. Drayson is. The power comes back on as she gets to the office. As Amos arrives, we see Jessica examining a wound in Mr. Drayson’s arm.

As others come in, the diner owner notices that someone smashed up the CB radio.

Jessica adds that whoever it is now has the gun. And on that bombshell, we fade to black and go to commercial.

When we come back, Amos searches each person but no one has the gun.

As Jessica is bandaging up Carey, Captain Downing takes over when Carey complains of pain, explaining that a sailor needs to know how to care for himself and his mates, since when you’re at sea you’re an island unto yourself, so to speak. Jessica admires his work. I can’t help but think that this means he’s the culprit and gave himself away by tying a landlubber’s knot rather than a seaman’s knot, or something like that, but Jessica doesn’t say.

She then notices that Stoner’s book has disappeared. After a bit of discussion, Jessica accuses Miriam of stealing it because it was rare and she knew its value. (Miriam has made small talk more than once about how little money she and her husband have.) Insulted, Kent dumps Miriam’s knitting bag out on the table to prove Jessica wrong, only to prove her right.

Miriam took it because it’s extremely rare and worth nearly $2,000. It would be worth more but the dust jacket and binding are in terrible condition.

Jessica finds the part about the binding interesting because Stoner clearly didn’t buy the book to read it. She examines the binding and finds a safe deposit box key stashed in it.

Jessica then asks Captain Downing if that’s what he had been looking for. She then adds, “Or should I say Mr. Downing, or whatever your name really is. I think you can drop the pretense of being a sailor. A real sailor would have tied a square knot, not a granny, as you did.”

(Square knots and granny knots are very similar, but the square knot reverses the direction of the second wrap-over from the first and results in a more secure knot.)

Captain Downing then pulls the gun out of Amos’ overcoat—Amos exclaims at this and Captain Downing replies that he figured Amos wouldn’t look in his own pocket. A gust of wind blows open the door, distracting Downing, and Amos and Steve, working together, manage to overpower him.

When the situation is resolved, Downing exclaims that they won’t be able to pin Stoner’s murder on him. Stoner was already dead when he searched his things for the key. He admits to being the third partner, but says Stoner double-crossed him and hid the money. He protests, though, that it is absurd to think he would have killed Stoner under these circumstances, stuck here like a rat in a cage. All the authorities would need to do is find out who he was, and his motive would put him away.

Jessica then figures it out. She says that Downing is telling the truth and Amos was right all along. It was Ben Gibbons who killed Stoner. She thinks Ben didn’t mean to kill him, but she can prove that he did. There were grease marks on Stoner’s collar, which never would have been there if Ben had merely stabbed Stoner, as he said.

Ben sits down and confesses. He hadn’t originally meant to kill Stoner. He just wanted him to know how much hurt he had caused. But Stoner was cold. He said he didn’t care about some dumb kid who got in the way, that he’d done his time, and that there was nothing anybody could do. This enraged Ben so much that he grabbed Stoner by the neck and didn’t let go until Stoner was dead. When the rage passed, he realized what he had done and that he was no better than Stoner had been. When he saw the Captain get on the bus he figured he was a goner, but to his amazement the Captain only rifled through Stoner’s things and stole his suitcase. After a few minutes of wondering what to do, he decided to stab Stoner with the screwdriver. The coroner would figure out that the stabbing wasn’t how Stoner died, and since the police would surely look into people’s backgrounds and prior relationships, that was his only way to escape.

The next day, in better weather, the local police take Ben into custody. Cyrus Leffingwell remarks to Jessica that he feels sorry for Ben. Jessica concurs, saying that a good lawyer may be able to make a case for temporary insanity, and that perhaps it would be justified. Leffingwell asks if she and Sheriff Tupper will be joining them on the bus, but she informs him that they’re going back to Cabot Cove, so he bids her a fond farewell, and she says that the pleasure of their acquaintance was all hers.

Amos then comes up and fills her in on what they missed in Portland. When Jessica didn’t show up, one of the sheriffs who loves the sound of his own voice ad-libbed a speech for over an hour. And he knew that they should have been there for the drawing for the big-screen TV. When Jessica tells him that she’s sorry for him, but he’ll survive without it, he replies that it wasn’t his name that came up; it was hers.

And on Jessica’s reaction to that we go to credits.

I really liked this episode. I mean, how do you not love a mystery set on a dark and stormy night?

Actually, it’s not that hard, given that plenty of bad mysteries have been set on dark and stormy nights, but nonetheless it is a great element in a story. And the broken-down bus at the diner really cements the isolation and gives us the fun of a very limited cast of characters and short windows of opportunity. It even has a minor flavor of Murder on the Orient Express, in how many characters turn out to be connected to the dead man.

The downside to the great setting, with its tight constraints that really increase the intrigue, is that it makes the writer’s job much harder, and they were at the limits of their ability. For example, why did the bus driver wait until Stoner was alone? There was no great likelihood of him ever being alone. It was established that Stoner was afraid of his former partner, and the best way to avoid being alone with his former partner was to avoid being alone at all. Now, there was no way for the bus driver to know that Stoner’s former partner would be on the bus, but people in storms don’t usually try to isolate themselves.

I do think that this can be worked out, though. If the bus driver had done research and found out that this diner was the world’s largest diner with a maze of rooms, then after enough hours of waiting it would have been reasonable for him to take breaks from working on the engine. People would eventually find some way to entertain themselves, so he could probably have found a way to get at Stoner that at least wasn’t too likely to be overheard, even if just because everyone had drifted to different places and nowhere had more than a few people in it. That should have been sufficient for his purposes, if he really only wanted to tell Stoner how much pain he had caused and wasn’t originally planning to kill him.

But why did Stoner remain alone on the bus? He had no reason to and significant motivation not to. Speaking of people who probably shouldn’t have been on the bus, why did Steve bring his heavily pregnant wife on the bus to confront Stoner? Also, why did he wait until the bus broke down? He’d have had no way to know that the bus would break down, and it would have been far more natural to sit next to Stoner shortly after he got on the bus. That would have prevented Stoner from getting away, while waiting until they reached a bus station would have made it easy for Stoner to refuse to talk to Steve.

The safe deposit box key is also a problem. Safe deposit boxes require regular payment of a fee to maintain them. There are grace periods and such, but there’s no way that Stoner was able to pay them from prison for fifteen years. Among other things, if he tried, the authorities would have found out about the safe deposit box and obtained a warrant for it. And while there are grace periods for abandoned safe deposit boxes, after fifteen years the contents of the box would long ago have been escheated to the state. Even before that, the bank would have opened and inventoried the abandoned box. Since that would have been only a year or so after a notorious bank robbery, there’s a good chance they would have looked for obvious things like consecutive serial numbers and contacted the police. Banks are required to report cash transactions over $10,000, so the discovery of $500,000 in cash would certainly raise a few eyebrows. This last part is pretty fixable, though: instead of a key to a safe deposit box, Jessica could have found a map to where the money was buried in a chest, wrapped in several layers of sealed plastic bags. That would have been a lot more fun, too.

Which brings me to the question of who killed Stoner. I think it was a pity that the bus driver turned out to be the killer. It would have been more fun if it had been the Captain. A simple revenge killing isn’t properly the subject of a murder mystery. A proper murder mystery is based on the misuse of reason towards some end that should be thwarted. (Revenge for a killing that the criminal justice system will never address is enough of a grey area to make it less fun.) Had the captain been the murderer, it would have been more fitting in this regard. And despite the captain’s protestations, it would not have been stupid to kill Stoner at the diner. No one knew that there was any connection between them; that’s the whole reason the captain was never caught. He could also have had a double motive: he could have been reasonably prosperous and afraid of Stoner blackmailing him. The statute of limitations would have run out, but the revelation that he had been part of a bank robbery gang that got an innocent girl killed would have cost him quite a lot, since respectable people would have wanted nothing to do with him. Some people will do a lot to avoid losing social status.

One final nit I have to pick is the question of how everyone knew that Stoner would take this bus. They established that his release date had been made public, but in 1985 it would not have been easy to find out that the only thing someone released from that prison can do is take the bus, and that there’s only one bus that comes through in the evening. That is itself a bit odd, since prison releases usually happen in the morning, and one could reasonably expect some kind of regular transportation to and from the prison for staff and visitors. Those would mostly be local buses, of course, so this could probably be fixed by having the people in the know aware that Stoner needed to get to Portland as fast as possible and so would wait for the one bus coming through that would take him there. I do understand why, for brevity, they didn’t address this (I like to describe Murder, She Wrote as a sketch of a murder mystery), but even under the best of conditions it is a bit of a problem.

Speaking of it being a sketch of a murder mystery, they never explained Stoner’s relationship to Julie Gibbons’ death. Jane describes it as, “[Steve’s father] was killed during the Danvers robbery. Along with an innocent bystander. A woman.” The newspaper article that talks about Stoner’s release says, “During the thieves’ escape attempt, an innocent bystander, Julie Gibbons, 16, was killed, along with one of the criminals, Everett Pascal.” They’re both rather conspicuously in the passive voice, but it sounds more like Julie was shot by the police when they were shooting at the robbers, not like the robbers killed her. Which would still make the robbers morally responsible for her death, but probably wouldn’t make them responsible for it in their eyes, making Stoner’s provocative response unlikely. “Hey, I’m sorry about your daughter’s death, but I wasn’t the one who shot her—the people who shot her were shooting at me, and I really wish she hadn’t been near us. She seemed like a good kid.” That kind of thing can go a long way to making an angry father less dangerous, and Stoner certainly gave the impression of a coward. Plus, had he actually directly killed the girl during an armed bank robbery, he probably would not have gotten out of prison after just fifteen years.

Setting the plot aside, there were a number of good characters in this episode. Cyrus Leffingwell was a lot of fun. It’s always nice to have an imperturbable character with sense in a murder mystery (other than the detective). Steve was played a bit too angry for my taste, but I very much liked his character arc. Carey Drayson had the beginnings of a good character, though after establishing him the episode mostly just uses him as a plot point. The characters of Kent and Miriam were also interesting: they were big characters full of personality, but they had nothing to do with the murder. It’s helpful to have some counterpoint characters in a story. It’s good for the story, and it also serves the practical purpose of keeping the murderer from being obvious as the only real character. Of course, the temptation for the writer is often in the opposite direction, toward making the murderer barely a character at all. That is closer to what we got here: Ben Gibbons didn’t have much of a personality, though Michael Constantine did convey a lot of anguish non-verbally.

Next week we’re in Texas for Armed Response.
